International development was revolutionized by experiments and evaluations of its methods. Meta-science can learn from it.

Science is the fundamental engine of economic growth and social progress. Accepting this naturally raises the question of what we – as a society – can do to accelerate science, and to direct science toward solving society’s most important problems.

While both governments and philanthropies invest heavily in supporting scientific research, we know relatively little about what investment strategies are most effective. Take sourcing – finding the projects and researchers to consider giving support to. Is it better to host open calls for applications or tap into a diverse network of scouts who make nominations? Which approach is most effective at attracting applications from younger researchers, those entering science from nontraditional life and career paths, and members of other traditionally underrepresented groups?

Or consider the review stage. How should funders weigh the promise of a project versus the track record of the proposer in making funding decisions? Whose expertise should they solicit?

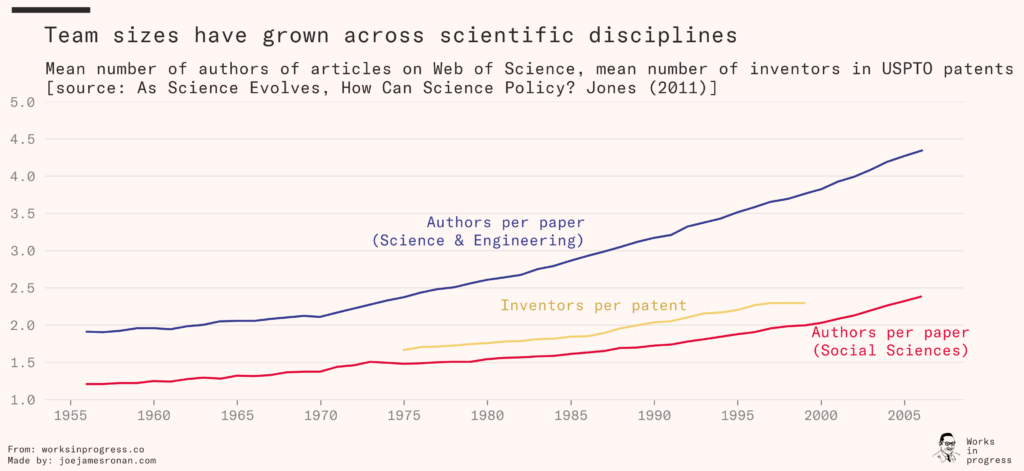

It is unlikely that there is a unique, ‘one size fits all’ answer to questions like these. After all, the structure of scientific research itself varies substantially across fields, and changes over time. For example, over the past several decades science has been shifting toward team production: Since at least 1955, average team sizes – across science and engineering journal publications, social sciences journal publications, and patents – have risen quickly, at rates of 15–20 percent per decade. As of 2005, more than half of the documents in each of these three measures of innovative activity had multiple authors or inventors.

Moreover, team-authored papers are four times more likely to be ‘home run’ papers, as measured by being cited at least 100 times. As Ben Jones has argued, team science being both increasingly common and high impact suggests we may want new ways to evaluate ideas and to recognize scientific contributions, different from traditional structures (such as tenure systems and research prizes) that are centered on individuals.

All of this calls for serious investment in science’s ability to improve itself, by researching how we support and structure science and how scientists themselves do research. It calls, in other words, for investment in the field of metascience – the science of doing science. This work will require developing measurement and feedback loops that allow us to observe – in a descriptive sense – the processes of science; to diagnose problems in a way that suggests potential solutions; to rigorously test and evaluate the efficacy of those potential solutions; and to support the rollout of solutions identified as effective in order to improve the productivity of science at a large scale.

We are writing as two academic economists who have witnessed the spread of similar scientific methods in other domains – particularly in the field of international development. This experience has much to teach us about the work needed to build a practice of metascience, and (ultimately) to accelerate science for social good.

Lessons from the field of international development

The two of us were drawn to the field of economics and inspired by how development economists were not just writing academic research papers, but rather were embedding rigorous research in real-world organizations, generating practical insights on how to improve – or even save – lives. Reflecting on the progress that has been made in the field of international development over the past few decades, we think there are two key lessons for metascience.

First, individual academics can make a real difference – through their own research, and through advising, mentoring, and teaching students.

The 2019 Nobel Prize in economics recognized three individual academics – Abhijit Banerjee, Esther Duflo, and Michael Kremer – who led a fundamental shift in the role of research in shaping policy aimed at bettering the lives of the global poor. In the mid-1990s, Kremer and colleagues began partnering with a local nongovernmental organization in rural Kenya, ICS Africa, to learn which interventions were most effective in improving educational outcomes for children. A conventional approach to this question might have looked at correlations: collecting survey data on schools across Kenya, for example, and asking which inputs – teacher characteristics, measures of access to textbooks and flip charts, provision of school meals – were correlated with students’ learning outcomes.

Kremer and his team took a new and different approach, constructing field experiments to shed light on these questions. Some randomly chosen schools received additional resources, others did not, and – by nature of the randomization – the research team was able to transparently document causal facts directly relevant to education policy. Retrospective correlational studies generally suggested that additional textbooks and flip charts (a form of instruction that was thought to potentially be more accessible to weaker students) improved test scores. However, these effects largely disappeared when tested in randomized experiments.

The methodological approach of randomized experiments was of course not new: When we want to know whether a new drug benefits patients, by default we construct a randomized trial that aims to provide evidence on the drug’s safety and efficacy. By randomly selecting some patients to receive the drug and others to receive a placebo, we can be statistically confident that any differences in health outcomes between the treatment and control groups reflect a causal impact of the drug. But the idea of applying this experimental approach to development policy questions was new, and the consequences of this relatively simple series of studies have been far-reaching.
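To make the contrast between correlational and experimental evidence concrete, here is a minimal simulation sketch. It is not drawn from the Kenyan studies: the variable names, the unobserved `resources` factor, and all numbers are assumptions chosen for illustration. Textbooks are given a true effect of zero, yet schools with more resources are both more likely to have textbooks and more likely to score well.

```python
import random
from statistics import mean


def simulate_gaps(n_schools=20000, true_effect=0.0, seed=0):
    """Return (observational_gap, experimental_gap) in mean test scores.

    All quantities are hypothetical and exist only to illustrate confounding.
    """
    rng = random.Random(seed)
    obs = {True: [], False: []}  # scores grouped by textbook status, observational world
    rct = {True: [], False: []}  # scores grouped by textbook status, randomized world
    for _ in range(n_schools):
        resources = rng.gauss(0, 1)  # unobserved confounder (assumed)
        noise = rng.gauss(0, 1)
        # Observational world: better-resourced schools are more likely to have textbooks.
        has_books_obs = resources + rng.gauss(0, 1) > 0
        obs[has_books_obs].append(resources + true_effect * has_books_obs + noise)
        # Experimental world: a coin flip decides which schools get textbooks.
        has_books_rct = rng.random() < 0.5
        rct[has_books_rct].append(resources + true_effect * has_books_rct + noise)
    return (
        mean(obs[True]) - mean(obs[False]),
        mean(rct[True]) - mean(rct[False]),
    )


obs_gap, rct_gap = simulate_gaps()
print(f"observational gap: {obs_gap:.2f}")
print(f"experimental gap:  {rct_gap:.2f}")
```

Under these assumptions the observational gap is clearly positive even though textbooks do nothing, while the randomized gap sits close to zero, because the coin flip breaks the link between resources and textbook status.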

The substantive conclusions of these initial studies shaped the direction of subsequent research by Banerjee and Duflo in India and elsewhere, leading them to largely focus on interventions aimed at teachers rather than physical inputs. For example, the teaching at the right level (TaRL) approach developed by Indian NGO Pratham, which groups children according to learning level rather than age or grade, was shown in a series of randomized evaluations to consistently improve learning outcomes. The program is estimated to have improved learning opportunities for over 60 million students in India and Africa.

The burgeoning field of experimental evaluation also created fresh opportunities for young researchers. In another field experiment in Kenya, then–PhD student Ted Miguel collaborated with his advisor (Kremer) to investigate the effects of providing deworming pills for parasitic infections. Their work documented that deworming generated large benefits to children in rural Kenyan primary schools – a more than seven-percentage-point increase in school attendance, equivalent to a one-quarter reduction in total school absenteeism – but also that parents were quite sensitive to the costs of deworming pills.

This finding, and follow-up work in the area, has shaped subsequent policy including recommendations by the World Health Organization and nonprofit evaluator GiveWell, which routed a large amount of philanthropic funding to deworming work. And the study was an important career step for Miguel, who subsequently founded the leading West Coast hub for development economics, the Center for Effective Global Action at UC Berkeley. More generally, Banerjee, Duflo, and Kremer trained a number of students who themselves have gone on to be leaders in the field of economics.

Second, this impact can be hugely amplified by institutional investments that build out a new ecosystem around the initial research entrepreneurs.

The impact that Banerjee, Duflo, Kremer, and their colleagues had through their personal research and mentorship was hugely amplified by institutional investments they and others made to deepen and broaden the scope and impact of this agenda beyond just these individuals and their students.

Perhaps most notable in this amplification was the establishment of J-PAL – the Jameel Poverty Action Lab – by Banerjee and Duflo together with Sendhil Mullainathan. Over the last two decades, J-PAL has championed the value of experimental methods for tackling policy challenges, and developed initiatives to address issues from climate change to gender and economic agency with timely, policy-relevant research. For example, evidence from a series of randomized evaluations by J-PAL affiliated researchers helped shift the global debate in favor of providing insecticide-treated bed nets for free, rather than charging a nominal fee to (so the argument went) screen out people who wouldn’t use them and increase the likelihood that those who bought bed nets would actually use them.

J-PAL supports a network of academics in conducting randomized experiments aimed at reducing global poverty. Although J-PAL’s impact is difficult to quantify, it estimates that more than 540 million people have been reached by programs that were scaled up after having been evaluated by J-PAL-affiliated researchers. And the footprint of this experimental approach to poverty reduction extends well beyond J-PAL affiliates, and beyond the academy. Organizations such as the nonprofit IDinsight have been created to provide complementary support for research and randomized evaluations beyond those supported by academics alone.

As the body of credible evidence expanded, this in turn motivated the creation of new organizations specialized in taking effective interventions to greater scale. Evidence Action, for example, provides dispensers for safe water and deworming treatments to hundreds of millions, based on the evidence that both are cost-effective ways of relieving the burden of poverty. Research also played a key role in increasing GiveWell’s spending on water treatment interventions after empirical evidence suggested that practical interventions could substantially reduce mortality.

GiveWell incubation grants were another novel approach, providing grants to organizations that might usefully partner with academic researchers in trying to integrate research into operationalizing and scaling effective interventions. And Kremer founded USAID’s Development Innovation Ventures (DIV), which provides grant funding in a tiered approach with scope to fund both the creation of initial evidence of effectiveness and the scale-up of interventions shown to work well at small scales: stage 1 pilot grants (up to $200,000) to test new ideas; stage 2 grants (up to $1,500,000) to support rigorous testing to determine impact or market viability; stage 3 grants (up to $15,000,000) to transition proven approaches to scale in new contexts or geographies; and evidence grants (up to $1,500,000) to support impact evaluations in cases where more information on impact and cost-effectiveness is needed.

Lessons from the example of cash transfers

Rigorous scientific evidence is rarely more important than when a new idea is seen as risky – even crazy. People will often agree in principle that risk-taking should be encouraged, but in practice the incentives often point the other way. Risk-takers in large organizations receive little of the upside benefit if their ideas pan out, and bear much of the downside risk, the embarrassment and criticism, if they do not. As Caleb Watney has argued, research and experimentation can get us out of this equilibrium by providing value-neutral justifications for deviations from the status quo.

As an example, cash transfers to people living in extreme poverty were once perceived as a dubious or even crazy idea. But the adoption of the rigorous experimental methods championed by Banerjee, Duflo, Kremer, and their colleagues played a key role in reversing that perception.

The first wave of cash transfer programs was conditional, meaning that households had to meet certain conditions – children attending school, or completing routine medical visits – to receive them. Families were then free to use the money as they wished. In 1997 the government of Mexico introduced a major new conditional cash transfer scheme called Progresa, and – crucially – made the decision to embed an experimental evaluation in its rollout. For the evaluation, 506 rural communities from 7 Mexican states were selected, with 320 randomly assigned to receive benefits immediately and 186 to receive benefits later. By comparing the early recipients with the later recipients during the 18-month period before the later recipients started receiving benefits, this randomized variation demonstrated meaningful impacts of the conditional cash transfer scheme not just on the outcomes directly incentivized by the program (increased school enrollment and routine medical visits), but also on a broader set of educational and health outcomes. These results contributed to the adoption of the conditional approach by a number of other countries in South America and beyond.
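The phase-in design described above can be sketched in a few lines. This is only an illustration of the logic, not the procedure the Progresa evaluators used: the community identifiers, the seed, and the helper names `assign_phase_in` and `difference_in_means` are all hypothetical. Only the counts (506 communities, 320 early, 186 later) come from the text.

```python
import random
from statistics import mean


def assign_phase_in(community_ids, n_early, seed=0):
    """Randomly split communities into early and later recipients."""
    rng = random.Random(seed)
    shuffled = list(community_ids)
    rng.shuffle(shuffled)
    return set(shuffled[:n_early]), set(shuffled[n_early:])


def difference_in_means(outcomes, early, late):
    """Estimate the effect as the early-minus-late gap in average outcomes,
    measured before the later group starts receiving benefits."""
    return mean(outcomes[c] for c in early) - mean(outcomes[c] for c in late)


# Hypothetical identifiers standing in for the 506 evaluation communities.
communities = [f"community_{i}" for i in range(506)]
early, late = assign_phase_in(communities, n_early=320)
print(len(early), len(late))  # prints: 320 186
```

Because assignment is random, the later recipients serve as a valid comparison group during the window before their benefits begin, and every community still receives the program eventually.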

But fully unconditional transfers, not contingent on any particular ‘good’ behavior, were a bridge too far for many. Enter GiveDirectly. As the experimental revolution in development economics was taking off, a group of economics graduate students, including one of us (Niehaus), saw an opportunity for a new organization to bring large-scale unconditional transfers to a broader audience. New technological platforms for money transfer – such as M-Pesa’s mobile money system in countries such as Kenya and Tanzania – would address concerns about the basic feasibility of doing this. But that left the more fundamental concern about desirability: was it actually a good idea? Or, as The New York Times wrote in their first piece on the effort, ‘Is It Nuts to Give to the Poor without Strings Attached?’

To help address such skepticism, GiveDirectly wove randomized evaluations into the fabric of its organization. Some of the first transfers it delivered were evaluated as part of a widely cited randomized trial. In total GiveDirectly has now conducted 20 such trials, including a first-of-its-kind study documenting large aggregate effects of transfers on local economies, with each dollar transferred expanding the local economy by $2.40. This research, along with external studies, helped earn GiveDirectly a top-charity recommendation from GiveWell along with several key initial GiveWell grants that helped to validate its approach.

The results of these randomized evaluations have convinced donors as well. GiveDirectly has now raised close to one billion dollars and delivered transfers in 11 countries. While harder to measure, there is also a widespread perception that unconditional transfers have been legitimized as an important tool for addressing global poverty.

Building a metascience ecosystem

The scientific research process can be hard to observe – and even harder to draw rigorous conclusions about – when studied from an outside perspective.

In terms of data, we can observe funded grants, published papers, and patent applications in publicly available sources. But very rarely do we have systematic ways of observing who applied for grants but wasn’t funded, which research projects were attempted but never published, and which findings were never brought to university technology transfer offices to be submitted in a patent application. Little or no data is publicly available to document the human side of science: which students were mentored and trained, and what their subsequent career paths were. These data limitations sharply limit which empirical research questions can be asked.

Just as critically, only in rare circumstances does observational data allow researchers to draw causal conclusions. As in the Kenyan primary school example discussed above, correlations in observational data often don’t hold up when tested in an experiment.

Our view is that – as in the case of international development funding – research on the scientific process is most productive when done hand in hand with the organizations that fund and support science. By partnering with science funders, researchers can gain a deeper understanding of the relevant institutions, and better understand which questions would be most useful to ask and (try to) answer. Partnerships with science funders can also make it possible to observe unsuccessful applicants and – at least in some cases – to isolate variation in the data that as good as randomly assigns resources like grants to some applicants but not others. For example, Pierre Azoulay and coauthors use internal NIH administrative data together with a detailed understanding of idiosyncrasies in the rules governing NIH peer review to quasi-experimentally estimate that $10 million in NIH funding leads to an additional 2.3 patents. Their work would not have been possible without relying on internal NIH administrative records documenting researchers who applied for but did not receive funding for some of their NIH grant applications.

Moreover, partnerships with science funders can sometimes offer opportunities for prospectively designed randomized evaluations on questions of interest to science funders. For example, many science funders are moving to systems of double-blind peer review out of concern that single-blind peer review (that is, a system in which reviewers observe the identities of applicants) may be biased against some groups. But evidence from some other settings, such as a French labor market policy, has suggested that anonymized review can sometimes hurt the very groups such policies intend to help. Randomized evaluations that compare the scores of grant applications under single- versus double-blind peer review can start to build a base of evidence on this question in the specific context of grant review.

There are exciting developments in such partnerships taking place today, throughout the ecosystem, that give us tremendous reason for optimism: We seem to be at a genuine point of inflection and opportunity. In the philanthropic and nonprofit space, we are inspired by the Gordon and Betty Moore Foundation’s commitment to ‘scientifically sound philanthropy’, which has led them to incorporate opportunities for measurement, evaluation, and experimentation into their science funding. And we are inspired by the vision of efforts like Research Theory, which aims to support basic research on how to improve the culture and management of scientific labs, with the eventual goal of – like USAID DIV and Evidence Action – scaling best practices.

Government agencies are making similarly exciting progress. In the case of cash transfers, USAID – the primary US foreign aid agency – orchestrated some of the key studies in partnership with GiveDirectly, with USAID putting its own programs to the test in a series of path-setting experimental trials. This took institutional courage and commitment, as well as internal champions to make the case for the value of experimentation (in this case, individuals like former USAID official Daniel Handel).

In the field of metascience, a number of government agencies are making meaningful progress. We are inspired by a recent randomized evaluation by the US Patent and Trademark Office (USPTO) that explored whether providing additional guidance to pro se applicants – that is, applicants who apply for a patent without a lawyer, who are thought to be disadvantaged in the patent application process – could improve their chances of receiving a patent. The results of the evaluation suggested that the assistance program increased the probability of pro se applicants obtaining a patent across the board, but particularly benefited women applicants and thus reduced the gender gap in patenting. The USPTO is now launching an internal research lab aimed at routinely conducting randomized evaluations to improve the effectiveness and efficiency of the patent examination process. We are also excited by the opportunities afforded by new public sector agencies such as ARPA-H and the National Science Foundation’s new Directorate for Technology, Innovation and Partnerships (TIP), which seem genuinely open to opportunities to build data and research directly into the fabric of these new organizations.

In many cases these efforts are creating or highlighting opportunities for experiments: A/B tests of old and new approaches, side by side, to determine which works best. Doing these experiments well will take a collaborative effort. As with the example of international development, we need to bring together institutional insight and experience from the entities that support science with the best thinking about how to measure scientific progress and expertise in designing rigorous and relevant experiments in the field. To this end, we recently launched the Science for Progress Initiative (SfPI) at J-PAL, with generous financial support from Open Philanthropy, Schmidt Futures, and the Alfred P. Sloan Foundation.

Building on J-PAL’s successful model, our initiative aims to provide financial and technical support for rigorous experimental evaluations of alternative approaches to supporting science. We anticipate that SfPI will fund partnerships between academic researchers and science funders, as well as matchmaking to help form these partnerships in the first place. One critical issue is that – precisely because the field is so nascent – the number of academics with expertise in both the economics of science and in the design of randomized evaluations is currently quite small. Our initiative is explicitly designed to address this challenge and make it as easy as possible for non-experimentalists to run their first experiments, for example by covering the cost of technical support from J-PAL staff on experimental design and implementation.

In some cases there is much to be learned even just from purely descriptive data collection and analysis. One idea is to allocate some proportion of science funding on the basis of ‘golden tickets’, or strong unilateral endorsements by a reviewer that are sufficient on their own to ensure that a grant proposal gets funded (as opposed to requiring consensus-driven aggregates of reviewer scores). We could evaluate the merits of such an approach by conducting a large-scale experiment, where some proposals are evaluated under a golden ticket regime and others using a more conventional process. But we could also begin by doing something far simpler: add a question to reviewer rubrics within the conventional process asking where they would allocate a golden ticket if they had one. The resulting data would give us a good idea how the distribution of funded people and projects under a golden ticket regime would compare to that under the status quo system.
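As a rough sketch of the descriptive comparison just described, suppose each proposal carries its reviewer scores plus a flag recording whether any reviewer said they would spend a golden ticket on it. The data, the field names, and the rule that ticketed proposals are funded first and remaining awards go by mean score are all assumptions made for illustration. An actual funder's rubric and tie-breaking rules would differ.

```python
from statistics import mean

# Hypothetical reviewer data: scores on a 1-5 scale and a golden-ticket flag.
proposals = {
    "A": {"scores": [4, 4, 4], "golden": False},
    "B": {"scores": [5, 2, 2], "golden": True},
    "C": {"scores": [3, 4, 4], "golden": False},
    "D": {"scores": [5, 1, 3], "golden": True},
    "E": {"scores": [4, 5, 4], "golden": False},
}


def fund_by_mean_score(proposals, n_awards):
    """Status quo: fund the proposals with the highest average score."""
    ranked = sorted(proposals, key=lambda p: (-mean(proposals[p]["scores"]), p))
    return set(ranked[:n_awards])


def fund_with_golden_tickets(proposals, n_awards):
    """Counterfactual: ticketed proposals first, then fill by average score."""
    ranked = sorted(
        proposals,
        key=lambda p: (not proposals[p]["golden"], -mean(proposals[p]["scores"]), p),
    )
    return set(ranked[:n_awards])


status_quo = fund_by_mean_score(proposals, n_awards=3)
golden = fund_with_golden_tickets(proposals, n_awards=3)
print("funded under both regimes:", sorted(status_quo & golden))
print("funded only with golden tickets:", sorted(golden - status_quo))
```

In this toy data the two polarizing proposals (B and D) displace two consensus picks, which is exactly the kind of shift in the funded portfolio that the added rubric question would let a funder measure without changing any real decisions.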

As activities like these multiply – with more institutions and more researchers seeking collaboration in the interest of improving science – there is also a need for structures to facilitate such connections. This broader convening and coordinating role is being played by actors like the Institute for Progress (IFP), a nonpartisan think tank based in Washington, DC, with an aligned vision for the broader agenda of making scientific research more evidence-based. For example, the IFP and the Federation of American Scientists recently co-launched the Metascience Working Group, which aims to connect academics and policy practitioners to promote research in this area. We are – in our personal capacities – collaborating closely with the Metascience Working Group and with Matt Clancy, Alec Stapp, and particularly Caleb Watney at the IFP, anticipating that (among other benefits) some of the collaborations that result may develop into randomized evaluations suitable for the J-PAL initiative to support.

Looking forward

Long-established fields of endeavor can change, revitalizing themselves using data, evidence, and experimentation to create a virtuous cycle of self-improvement. The field of international development provides one such example. Our hope and belief is that the field of scientific research will be next. Rather than basing the way we fund science on custom or anecdote, we can shift to a norm in which science funders build research into the way they support research, allowing them to measure and improve their own effectiveness over time.